The adaptive SAT (Scholastic Aptitude Test) exam was announced in January 2022 by the College Board, with the goal of modernizing the test and making it more widely available by migrating the exam from paper-and-pencil to computerized delivery. Moreover, the tests would become “adaptive.” But what does it mean to have an adaptive SAT? How does adaptive testing work, and why does it make tests more secure, efficient, accurate, and fair?


What is the SAT?

The SAT is the most commonly used exam for university admissions in the United States, though the ACT ranks a close second. Decades of research have shown that it accurately predicts important outcomes, such as 4-year graduation rates or GPA. Moreover, it provides incremental validity over other predictors, such as high school GPA. The adaptive SAT exam will use algorithms to make the test shorter, smarter, and more accurate.

The new version of the SAT has 3 sections: Math, Reading, and Writing/Language. These are administered separately from a psychometric perspective. The Reading section tests comprehension and analysis through passages from literature, historical documents, social sciences, and natural sciences. The Writing and Language section evaluates grammar, punctuation, and editing skills, asking students to improve sentence structure and word choice. The Math section covers algebra, problem-solving, data analysis, and some advanced math topics, divided into calculator and no-calculator portions. Each section measures critical thinking and problem-solving abilities, contributing to the overall score. The optional Essay was removed in 2021.

Digital Assessment

The new SAT with adaptive testing is being called the “Digital SAT” by the College Board. Digital assessment, also known as electronic assessment or computer-based testing, refers to the delivery of exams via computers. It’s sometimes called online assessment or internet-based assessment as well, but not all software platforms are online; some stay secure on LANs.

What is “adaptive”?

When a test is adaptive, it means that it is being delivered with a computer algorithm that will adjust the difficulty of questions based on an individual’s performance. If you do well, you get tougher items. If you do not do well, you get easier items.

But while this seems straightforward and logical on the surface, it involves a host of technical challenges. As researchers have delved into those challenges over the past 50 years, they have developed several approaches to how the adaptive algorithm can work.

- Adapt the difficulty after every single item

- Adapt the difficulty in blocks of items (sections), aka MultiStage Testing

- Adapt the test in entirely different ways (e.g., decision trees based on machine learning models, or cognitive diagnostic models)
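
The first approach in the list above, adapting after every item, can be illustrated with a minimal sketch. This is a deliberately simplified heuristic and not the algorithm of any real exam: operational adaptive tests select items using item response theory. The function names, the step-halving update rule, and the item difficulties are all assumptions made up for illustration.

```python
def next_item(ability, difficulties, used):
    """Pick the unused item whose difficulty is closest to the current ability estimate."""
    candidates = [i for i in range(len(difficulties)) if i not in used]
    return min(candidates, key=lambda i: abs(difficulties[i] - ability))


def run_item_level_cat(difficulties, answers_correctly, n_items=3, start=0.0, step=1.0):
    """Administer n_items one at a time, re-targeting the difficulty after each answer.

    answers_correctly is a callable taking an item difficulty and returning True/False.
    The ability estimate moves up after a correct answer and down after an incorrect
    one, with a step that is halved each time (a toy update rule, not IRT).
    """
    ability, used, path = start, set(), []
    for _ in range(n_items):
        item = next_item(ability, difficulties, used)
        used.add(item)
        correct = answers_correctly(difficulties[item])
        path.append((item, correct))
        ability += step if correct else -step
        step = max(step / 2, 0.1)
    return ability, path


# Hypothetical item bank (difficulties on an arbitrary scale) and a simulated
# examinee who answers correctly whenever the item difficulty is at most 0.8.
bank = [-2.0, -1.0, -0.5, 0.0, 0.5, 1.0, 2.0]
final_ability, path = run_item_level_cat(bank, lambda d: d <= 0.8)
print(final_ability, path)  # 0.75 [(3, True), (5, False), (4, True)]
```

Note how the examinee gets a harder item after the first correct answer and an easier one after the miss, which is the "do well, get tougher items" behavior described earlier.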

There are plenty of famous exams that use the first approach, including the NWEA MAP test and the Graduate Management Admission Test (GMAT). But the SAT uses the second approach. There are several reasons to do so; an important one is that it allows the use of “testlets,” which are items that are grouped together. For example, you probably remember test questions that have a reading passage with 3-5 attached questions; you can’t do that if you are picking a new standalone item after every item, as with the first approach.

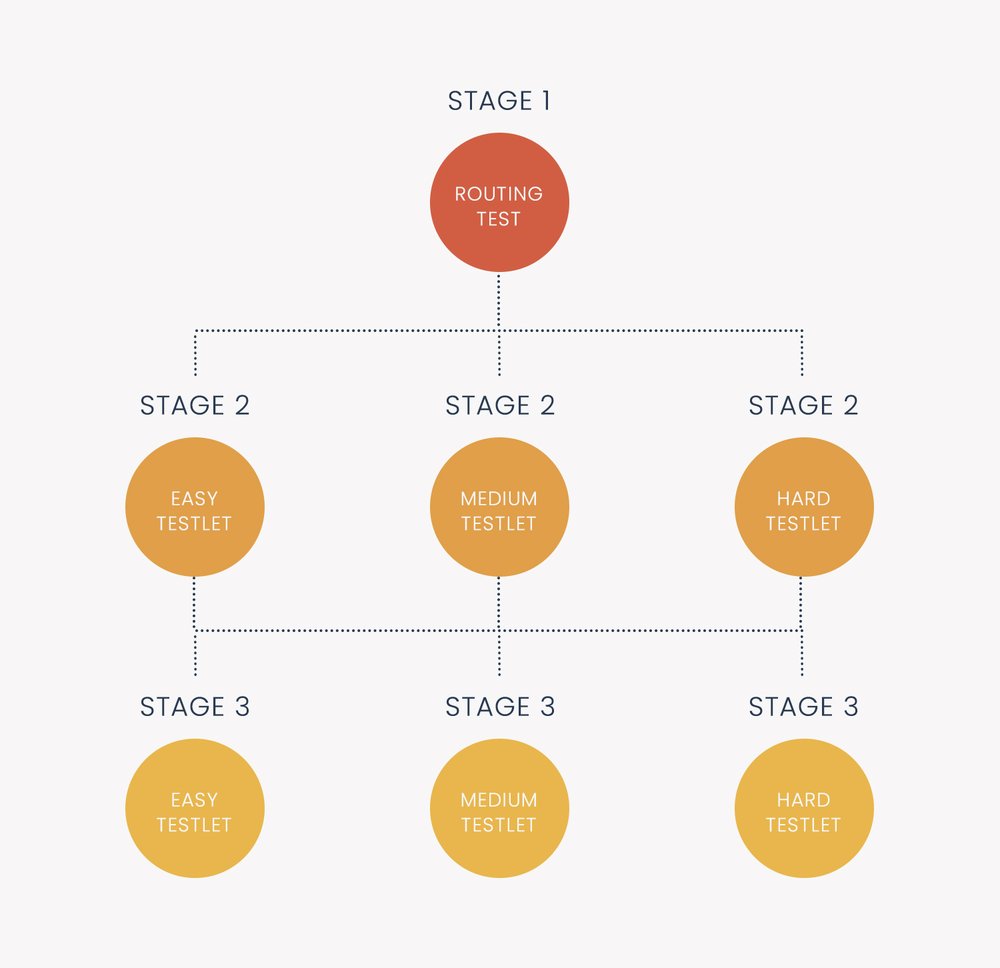

So how does it work? Each Adaptive SAT subtest will have two sections. An examinee will finish Section 1, and then based on their performance, get a Section 2 that is tailored to them. It’s not like it is just easy vs hard, either; there might be 30 possible Section 2s (10 each of Easy, Medium, Hard), or variations in between. The figure above depicts a 3-stage test.
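
The routing step can be sketched roughly as follows. This is only an illustration under stated assumptions: the function name `route_section_2` and the cutoffs of 40% and 70% correct are hypothetical, and a real multistage test would typically route on an IRT-based score rather than raw proportion correct.

```python
def route_section_2(section1_responses, cutoffs=(0.4, 0.7)):
    """Choose a Section 2 difficulty level from Section 1 performance.

    section1_responses is a list of 1 (correct) and 0 (incorrect).
    cutoffs are hypothetical proportion-correct thresholds, not real SAT values.
    """
    proportion = sum(section1_responses) / len(section1_responses)
    low, high = cutoffs
    if proportion < low:
        return "Easy"
    if proportion < high:
        return "Medium"
    return "Hard"


# Three simulated examinees with different Section 1 results.
print(route_section_2([1, 0, 0, 0, 1, 0, 0, 0, 0, 1]))  # 3 of 10 correct
print(route_section_2([1, 0, 1, 0, 1]))                  # 3 of 5 correct
print(route_section_2([1, 1, 1, 0, 1, 1, 1, 1, 0, 1]))  # 8 of 10 correct
```

Because the decision is made once per section rather than once per item, every item in Section 2 can be chosen in advance as a set, which is what makes passage-based testlets possible.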

How do we fairly score the results if students receive different questions? That issue has long been addressed by item response theory. Examinees are scored with a complex machine learning model which takes into account not just how many items they got correct, but which items, and how difficult or high-quality those items are. This is nothing new; it has been used by many large-scale assessments since the 1980s.
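
To make the idea concrete, here is a minimal sketch of item response theory scoring using a two-parameter logistic (2PL) model and a simple grid-based estimate (expected a posteriori, with a standard normal prior). The item parameters are invented for illustration, and the function names are assumptions; this is not the College Board's operational scoring procedure.

```python
import math


def p_correct(theta, a, b):
    """2PL probability of a correct answer for ability theta,
    item discrimination a, and item difficulty b."""
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))


def eap_score(responses, items, grid_points=81):
    """Estimate ability as the posterior mean over a grid from -4 to 4.

    responses: list of 1/0 answers; items: list of (a, b) tuples in the same order.
    """
    thetas = [-4 + 8 * k / (grid_points - 1) for k in range(grid_points)]
    weights = []
    for theta in thetas:
        weight = math.exp(-0.5 * theta ** 2)  # unnormalized standard normal prior
        for u, (a, b) in zip(responses, items):
            p = p_correct(theta, a, b)
            weight *= p if u else (1.0 - p)
        weights.append(weight)
    total = sum(weights)
    return sum(t * w for t, w in zip(thetas, weights)) / total


# Two hypothetical forms: the same response pattern (2 of 3 correct) on each.
easy_form = [(1.0, -1.5), (1.0, -1.0), (1.0, -0.5)]
hard_form = [(1.0, 0.5), (1.0, 1.0), (1.0, 1.5)]
print(eap_score([1, 1, 0], easy_form))
print(eap_score([1, 1, 0], hard_form))  # higher than the easy-form estimate
```

Both simulated examinees answered two of three items correctly, but the one who did so on harder items receives a higher ability estimate, which is how scores stay comparable across different sets of questions.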


Why an adaptive SAT?

Decades of research have shown that adaptive testing has well-known benefits. It requires fewer items to achieve the same level of accuracy in scores, which means shorter exams for everyone. It is also more secure, because not everyone sees the same items in the same order. It can produce a more engaging assessment as well, keeping the top performers challenged and keeping lower performers from checking out after becoming frustrated by items that are too difficult. And, of course, digital assessment has many advantages of its own, such as faster score turnaround and the use of tech-enhanced items. So, the migration to an adaptive SAT, on top of being digital, will be beneficial for students.
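
The claim that adaptive tests need fewer items can be illustrated with a short calculation. In item response theory, each item contributes "information" about an examinee, and the standard error of the score is one over the square root of the total information. The sketch below assumes a simple one-parameter logistic model and a hypothetical target standard error of 0.5; the function names are made up for this example.

```python
import math


def item_information(theta, b, a=1.0):
    """Fisher information of a logistic item at ability theta."""
    p = 1.0 / (1.0 + math.exp(-a * (theta - b)))
    return a * a * p * (1.0 - p)


def items_needed(theta, item_difficulty, target_se=0.5, max_items=1000):
    """Count how many items of the given difficulty are needed before the
    standard error (1 / sqrt(total information)) reaches target_se."""
    required_info = 1.0 / target_se ** 2
    total_info = 0.0
    for n in range(1, max_items + 1):
        total_info += item_information(theta, item_difficulty)
        if total_info >= required_info:
            return n
    return None  # target not reached within max_items


# Items matched to the examinee (difficulty equals ability) versus
# items that are two units too hard for the same examinee.
print(items_needed(theta=0.0, item_difficulty=0.0))  # 16
print(items_needed(theta=0.0, item_difficulty=2.0))  # more than twice as many
```

Well-targeted items are the most informative, so a test that adapts its difficulty to each examinee reaches the same precision with far fewer questions than one that gives everyone the same items.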

Nathan Thompson earned his PhD in Psychometrics from the University of Minnesota, with a focus on computerized adaptive testing. His undergraduate degree was from Luther College with a triple major of Mathematics, Psychology, and Latin. He is primarily interested in the use of AI and software automation to augment and replace the work done by psychometricians, which has provided extensive experience in software design and programming. Dr. Thompson has published over 100 journal articles and conference presentations, but his favorite remains https://scholarworks.umass.edu/pare/vol16/iss1/1/ .