Multidimensional item response theory (MIRT) has developed from its roots in factor analysis and unidimensional item response theory (IRT). This development has brought an increased emphasis on precise modeling of the item-examinee interaction and a decreased emphasis on data reduction and simplification. MIRT is a broad family of probabilistic models that describe an examinee’s likelihood of a correct response as a function of item parameters and multiple latent traits (dimensions). MIRT models define a multidimensional space in which individual differences on the targeted dimensions are described.

Within the MIRT framework, items are treated as the fundamental units of test construction. Each item is regarded as a multidimensional trial that yields valid and reliable information about an examinee’s location in a complex space. This philosophy extends unidimensional IRT to provide a more comprehensive description of item parameters and of how the information from items combines to depict examinees’ characteristics. Items therefore need to be written carefully so that they are sufficiently sensitive to the targeted combinations of knowledge and skills, and then selected carefully so that they improve estimates of examinees’ characteristics in the multidimensional space.

Motivation for the development of Multidimensional Item Response Theory

In modern psychometrics, IRT is typically used to calibrate the items of each scale separately, so that each scale is treated as unidimensional. Under unidimensional IRT models, an examinee’s response to an item depends solely on the item parameters and on a single examinee parameter, the latent trait θ. These models have the advantages of relatively simple mathematical forms, a wide range of applications, and some robustness to violations of their assumptions.
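For concreteness, one widely used unidimensional model is the two-parameter logistic (2PL) model. The notation here is assumed for illustration and does not come from the text above: $a_i$ is the discrimination of item $i$, $b_i$ is its difficulty, and $\theta_j$ is the single latent trait of examinee $j$.

```latex
P(u_{ij} = 1 \mid \theta_j) = \frac{1}{1 + \exp\left[-a_i(\theta_j - b_i)\right]}
```

The only examinee quantity in this expression is the scalar $\theta_j$, which is what makes the model unidimensional.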

However, real interactions between examinees and items are very likely more complex than these IRT models imply. Responding to a specific item probably requires examinees to apply several abilities and skills, especially in complex domains such as the natural sciences. Thus, although unidimensional IRT models are highly useful under specific conditions, psychometrics needed more sophisticated models that reflect the varied forms of examinee-item interaction. For that reason, unidimensional IRT models were extended to multidimensional models capable of representing situations in which examinees need multiple abilities and skills to respond to test items.

Categories of Multidimensional Item Response Theory models

There are two broad categories of MIRT models: compensatory and non-compensatory (partially compensatory).

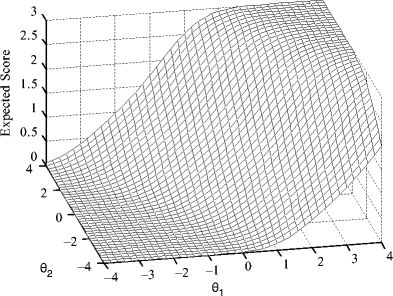

- Under the compensatory model, an examinee’s abilities work together to increase the probability of a correct response to an item, i.e. higher ability on one trait/dimension compensates for lower ability on another. For instance, suppose an examinee reads a passage about a current event and answers a question about it. This item assesses two abilities: reading comprehension and knowledge of current events. If the examinee already knows about the event, that knowledge compensates for lower reading ability. Conversely, if the examinee is an excellent reader, their reading skills compensate for a lack of knowledge about the event.

- Under the non-compensatory model, abilities do not compensate for each other, i.e. an examinee needs a high level of ability on all traits/dimensions to have a high chance of responding to a test item correctly. For example, suppose an examinee must solve a traditional mathematical word problem. This item assesses two abilities: reading comprehension and mathematical computation. An examinee with excellent reading ability but low computational ability will be able to read the text but not solve the problem. An examinee with the reverse profile will also fail to solve the problem, because they cannot understand what is being asked.

Within the literature, compensatory MIRT models are more commonly used.
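The contrast between the two categories can be sketched numerically. The code below is a minimal illustration, not a calibration routine. It assumes one common logistic form for each category: a weighted sum of abilities inside a single logistic function for the compensatory case, and a product of per-dimension logistic terms for the partially compensatory case. The function names and all parameter values are hypothetical.

```python
import math


def logistic(z):
    """Standard logistic function."""
    return 1.0 / (1.0 + math.exp(-z))


def compensatory_prob(theta, a, d):
    """Compensatory form (assumed): logistic of the weighted sum a.theta + d.

    theta: abilities per dimension, a: discriminations per dimension,
    d: scalar intercept of the item.
    """
    z = sum(a_k * t_k for a_k, t_k in zip(a, theta)) + d
    return logistic(z)


def noncompensatory_prob(theta, a, b):
    """Partially compensatory form (assumed): product of per-dimension terms.

    b: difficulty per dimension. The product can never exceed its smallest
    factor, so one weak dimension caps the overall probability.
    """
    p = 1.0
    for a_k, t_k, b_k in zip(a, theta, b):
        p *= logistic(a_k * (t_k - b_k))
    return p


# Hypothetical examinees: one balanced, one strong on dimension 1 only.
balanced = (0.0, 0.0)
lopsided = (2.0, -2.0)
a = (1.0, 1.0)

for label, theta in (("balanced", balanced), ("lopsided", lopsided)):
    pc = compensatory_prob(theta, a, d=0.0)
    pn = noncompensatory_prob(theta, a, b=(0.0, 0.0))
    print(f"{label}: compensatory={pc:.3f}, non-compensatory={pn:.3f}")
```

With these illustrative values the compensatory probability is the same for both examinees, because the high ability offsets the low one in the sum. The non-compensatory probability drops for the lopsided examinee, because the weak dimension limits the product.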

Applications of Multidimensional Item Response Theory

- Because MIRT analyses concentrate on the interaction between item parameters and examinee characteristics, they have prompted numerous studies of the skills and abilities needed to answer an item correctly, and of the dimensions to which test items are sensitive. This research area demonstrates the importance of thoroughly understanding how tests function. MIRT analyses can help identify the group differences and item sensitivities that contribute to test and item bias, and help explain the reasons behind differential item functioning (DIF) statistics.

- MIRT allows linking of calibrations, i.e. putting item parameter estimates from multiple calibrations into the same multidimensional coordinate system. This enables reporting examinee performance on different sets of items as profiles on multiple dimensions located on the same scales. Thus, MIRT makes it possible to create large pools of calibrated items that can be used for the construction of multidimensionally parallel test forms and computerized adaptive testing (CAT).
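The idea behind linking can be illustrated with a small sketch. It assumes a two-dimensional compensatory logistic model with response function logistic(a.theta + d). It shows only the invariance that linking relies on: if abilities are re-expressed in another coordinate system through an invertible linear map, the item parameters can be transformed to match, and the response probabilities stay the same. The matrix `A`, the shift `beta`, and all numeric values are hypothetical. Estimating such a transformation from real calibrations (for example from common items) is a separate problem not covered here.

```python
import math


def logistic(z):
    return 1.0 / (1.0 + math.exp(-z))


def prob(theta, a, d):
    """Compensatory two-dimensional response probability (assumed form)."""
    return logistic(a[0] * theta[0] + a[1] * theta[1] + d)


def inverse_2x2(m):
    """Inverse of a 2x2 matrix given as nested tuples."""
    (p, q), (r, s) = m
    det = p * s - q * r
    return ((s / det, -q / det), (-r / det, p / det))


def transform_theta(theta, A, beta):
    """Move an ability vector to the new coordinates: theta* = A theta + beta."""
    return (
        A[0][0] * theta[0] + A[0][1] * theta[1] + beta[0],
        A[1][0] * theta[0] + A[1][1] * theta[1] + beta[1],
    )


def transform_item(a, d, A, beta):
    """Move item parameters to the new coordinates.

    a* = a A^{-1} (a treated as a row vector), d* = d - a* . beta,
    so that a* . theta* + d* equals a . theta + d.
    """
    A_inv = inverse_2x2(A)
    a_star = (
        a[0] * A_inv[0][0] + a[1] * A_inv[1][0],
        a[0] * A_inv[0][1] + a[1] * A_inv[1][1],
    )
    d_star = d - (a_star[0] * beta[0] + a_star[1] * beta[1])
    return a_star, d_star


# Hypothetical transformation between two calibrations' coordinate systems.
A = ((1.2, 0.3), (-0.4, 0.9))
beta = (0.5, -1.0)

theta = (0.7, -0.2)          # examinee in the original coordinates
a, d = (1.1, 0.6), -0.3      # item in the original coordinates

theta_star = transform_theta(theta, A, beta)
a_star, d_star = transform_item(a, d, A, beta)

print(prob(theta, a, d), prob(theta_star, a_star, d_star))
```

The two printed probabilities agree up to floating-point error, which is why items from separate calibrations can be reported on common scales once a suitable transformation has been found.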

Conclusion

Given the complexity of the constructs in education and psychology and the level of detail provided in test specifications, MIRT is particularly relevant for investigating how individuals approach their learning and how that learning is subsequently influenced by various factors. MIRT analysis is still at an early stage of its development and is therefore a very active area of current research, particularly in connection with CAT technologies. Interested readers are referred to Reckase (2009) for more detailed information about MIRT.

References

Reckase, M. D. (2009). Multidimensional Item Response Theory. Springer.

Laila Issayeva earned her BA in Mathematics and Computer Science at Aktobe State University and Master’s in Education at Nazarbayev University. She has experience as a math teacher, school leader, and as a project manager for the implementation of nationwide math assessments for Kazakhstan. She is currently pursuing a PhD in psychometrics.