The two terms Norm-Referenced and Criterion-Referenced are commonly used to describe tests, exams, and assessments. They are often some of the first concepts learned when studying assessment and psychometrics. Norm-referenced means that we are referencing how your score compares to other people. Criterion-referenced means that we are referencing how your score compares to a criterion such as a cutscore or a body of knowledge. Test scaling is integral to both types of assessments, as it involves adjusting scores to facilitate meaningful comparisons.

Do we say a test is “Norm-Referenced” vs. “Criterion-Referenced”?

Actually, that’s a slight misuse.

The terms Norm-Referenced and Criterion-Referenced refer to score interpretations. Most tests can actually be interpreted in both ways, though they are usually designed and validated for only one or the other. More on that later.

Hence the shorthand of saying “this is a norm-referenced test,” even though it really means that norm-referenced is the primarily intended interpretation.

Examples of Norm-Referenced vs. Criterion-Referenced

Suppose you received a score of 90% on a Math exam in school. This could be interpreted in both ways. If the cutscore was 80%, you clearly passed; that is the criterion-referenced interpretation. If the average score was 75%, then you performed well above the class average; this is the norm-referenced interpretation. Same test, and both interpretations are possible and, in this case, valid.

What if the average score was 95%? Well, that changes your norm-referenced interpretation (you are now below average) but the criterion-referenced interpretation does not change.
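The Math exam example can be sketched in a few lines of Python. This is only an illustration: the two class score lists are made up (chosen so their means are 75 and 95), and the function names `criterion_referenced` and `norm_referenced` are hypothetical helpers, not part of any library.

```python
from statistics import mean

def criterion_referenced(score, cutscore):
    """Pass/fail depends only on the fixed cutscore, not on other examinees."""
    return "pass" if score >= cutscore else "fail"

def norm_referenced(score, group_scores):
    """Percentile rank: percentage of the group scoring strictly below this score."""
    below = sum(1 for s in group_scores if s < score)
    return 100.0 * below / len(group_scores)

# Hypothetical classes, invented for illustration only.
class_a = [60, 65, 70, 75, 80, 85, 90]    # mean is 75
class_b = [90, 92, 94, 95, 96, 98, 100]   # mean is 95

my_score = 90
cutscore = 80

for name, group in [("class A", class_a), ("class B", class_b)]:
    print(name, "mean:", mean(group))
    print("  criterion-referenced:", criterion_referenced(my_score, cutscore))
    print("  norm-referenced percentile rank:", round(norm_referenced(my_score, group), 1))
```

The pass/fail result is the same for both classes, while the percentile rank changes with the group, which is the distinction the example above describes.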

Now consider a certification exam. This is an example of a test that is specifically designed to be criterion-referenced. It is supposed to measure that you have the knowledge and skills to practice in your profession. It doesn’t matter whether all candidates pass or only a few candidates pass; the cutscore is the cutscore.

However, you could still interpret your score by looking at your percentile rank compared to other examinees; it just doesn’t impact the cutscore.

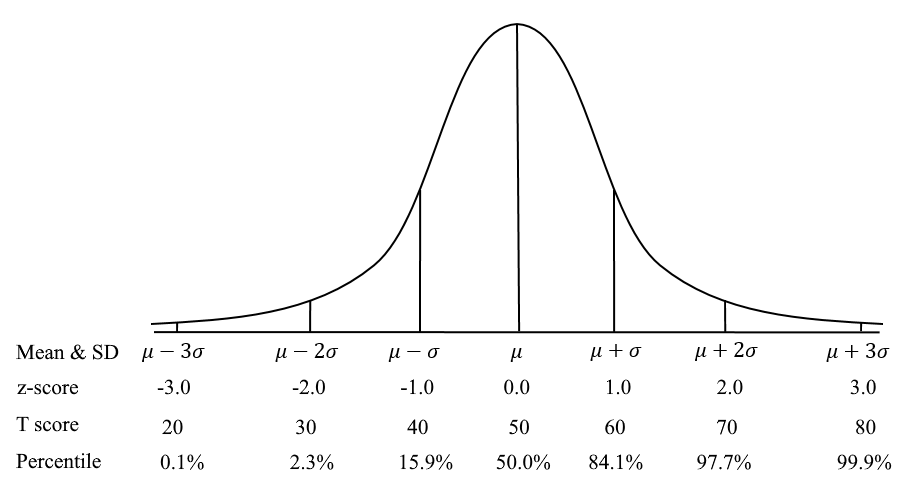

On the other hand, we have an IQ test. There is no criterion-referenced cutscore of whether you are “smart” or “passed.” Instead, the scores are located on a normal curve (mean=100, SD=15), which is the standard normal curve (mean=0, SD=1) rescaled, and all interpretations are norm-referenced. Namely, where do you stand compared to others? The scales of the T score and z-score are norm-referenced, as are percentiles. So are many tests in the world, like the SAT with a mean of 500 and SD of 100.
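The norm-referenced scales mentioned here are all linear rescalings of the z-score. A minimal sketch, assuming scores are normally distributed and using the conventional T-score scale (mean 50, SD 10); the helper names `z_to_scale` and `z_to_percentile` are hypothetical:

```python
from statistics import NormalDist

def z_to_scale(z, scale_mean, scale_sd):
    """Linearly rescale a z-score onto a scale with the given mean and SD."""
    return scale_mean + scale_sd * z

def z_to_percentile(z):
    """Percentage of a standard normal population scoring below z."""
    return 100.0 * NormalDist().cdf(z)

z = 1.0  # one standard deviation above the mean
print("T score:   ", z_to_scale(z, 50, 10))
print("IQ scale:  ", z_to_scale(z, 100, 15))
print("500/100:   ", z_to_scale(z, 500, 100))
print("Percentile:", round(z_to_percentile(z), 1))
```

Every one of these numbers answers the same question (where do you stand compared to others?), just on a different reporting scale.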

Is this impacted by item response theory (IRT)?

If you have looked at item response theory (IRT), you know that it scores examinees on what is effectively the standard normal curve (though this is shifted if Rasch). But IRT-scored exams can still be criterion-referenced. They can still be designed to measure a specific body of knowledge and have a cutscore that is fixed and stable over time.

Even computerized adaptive testing can be used like this. An example is the NCLEX exam for nurses in the United States. It is an adaptive test, but the cutscore is -0.18 (NCLEX-PN on Rasch scale) and it is most definitely criterion-referenced.
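To make the idea concrete, here is a simplified sketch of a criterion-referenced decision on an IRT scale, using the -0.18 cutscore quoted above. It only compares a final ability estimate (theta) to the fixed cutscore; operational adaptive exams typically use more elaborate decision rules, so this should not be read as how the NCLEX itself makes pass/fail decisions. The function name `classify` and the two cohorts are hypothetical.

```python
CUTSCORE = -0.18  # cutscore quoted in the text, on the Rasch (logit) scale

def classify(theta, cutscore=CUTSCORE):
    """Criterion-referenced decision: compare theta to a fixed cutscore."""
    return "pass" if theta >= cutscore else "fail"

def pass_rate(thetas, cutscore=CUTSCORE):
    """Share of a cohort at or above the cutscore (it can be anything from 0 to 1)."""
    return sum(1 for t in thetas if t >= cutscore) / len(thetas)

# Two hypothetical cohorts of ability estimates, invented for illustration.
strong_cohort = [0.4, 0.9, 1.3, 0.1, 0.7]
weak_cohort = [-1.2, -0.6, -0.3, 0.2, -0.9]

candidate_theta = 0.1
print("Decision:", classify(candidate_theta))
print("Pass rate, strong cohort:", pass_rate(strong_cohort))
print("Pass rate, weak cohort:  ", pass_rate(weak_cohort))
```

The candidate's decision is identical no matter which cohort they tested with; only the cohort pass rate moves, which is what makes the interpretation criterion-referenced.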

Building and validating an exam

The process of developing a high-quality assessment is surprisingly difficult and time-consuming. The greater the stakes, volume, and incentives for stakeholders, the more effort goes into developing and validating it. ASC’s expert consultants can help you navigate these rough waters.

Nathan Thompson earned his PhD in Psychometrics from the University of Minnesota, with a focus on computerized adaptive testing. His undergraduate degree was from Luther College with a triple major of Mathematics, Psychology, and Latin. He is primarily interested in the use of AI and software automation to augment and replace the work done by psychometricians, which has provided extensive experience in software design and programming. Dr. Thompson has published over 100 journal articles and conference presentations, but his favorite remains https://scholarworks.umass.edu/pare/vol16/iss1/1/ .