A modified-Angoff method study is one of the most common ways to set a defensible cutscore on an exam. It therefore means that the pass/fail decisions made by the test are more trustworthy than if you picked an arbitrary round number like 70%. If your doctor, lawyer, accountant, or other professional has passed an exam where the cutscore has been set with this method, you can place more trust in their skills.

What is the Angoff method?

The Angoff method is a scientific way of setting a cutscore (pass point) on a test. If you have a criterion-referenced interpretation, it is not legally defensible to just conveniently pick a round number like 70%; you need a formal process. There are a number of acceptable methodologies in the psychometric literature for standard-setting studies, also known as cutscores or passing points. Some examples include Angoff, modified-Angoff, Bookmark, Contrasting Groups, and Borderline. The modified-Angoff approach is by far the popular approach. It is used especially frequently for certification, licensure, certificate, and other credentialing exams.

It was originally suggested as a mere footnote by renowned researcher William Angoff, at Educational Testing Service. Studies found that panelists involved in modified-Angoff sessions typically demonstrate high agreement levels, with inter-rater reliability often surpassing 0.85, showcasing its robustness in decision consistency

How does the Angoff approach work?

First, you gather a group of subject matter experts (SMEs), with a minimum of 6, though 8-10 is preferred for better reliability, and have them define what they consider to be a Minimally Competent Candidate (MCC). Next, you have them estimate the percentage of minimally competent candidates that will answer each item correctly. You then analyze the results for outliers or inconsistencies. If experts disagree, you will need to evaluate inter-rater reliability and agreement, and after that have the experts discuss and re-rate the items to gain better consensus. The average final rating is then the expected percent-correct score for a minimally competent candidate.

Advantages of the Angoff method

- It is defensible. Because it is the most commonly used approach and is widely studied in the scientific literature, it is well-accepted.

- You can implement it before a test is ever delivered. Some other methods require you to deliver the test to a large sample first.

- It is conceptually simple, easy enough to explain to non-psychometricians.

- It incorporates the judgment of a panel of experts, not just one person or a round number.

- It works for tests with both classical test theory and item response theory.

- It does not take long to implement – if a short test, it can be done in a matter of hours!

- It can be used with different item types, including polytomously scored items (multi-points).

Disadvantages of the Angoff method

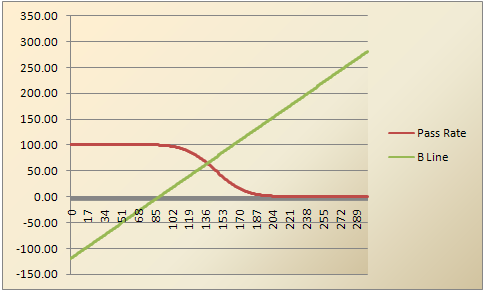

- It does not use actual data, unless you implement the Beuk method alongside.

- It can lead to the experts overestimating the performance of entry-level candidates, as they forgot what it was like to start out 20-30 years ago. This is one reason to use the Beuk method as a “reality check” by showing the experts that if they stay with the cutscore they just picked, the majority of candidates might fail!

Example of the Modified-Angoff Approach

First of all, do not expect a straightforward, easy process that leads to an unassailably correct cutscore. All standard-setting methods involve some degree of subjectivity. The goal of the methods is to reduce that subjectivity as much as possible. Some methods focus on content, others on examinee performance data, while some try to meld the two.

Step 1: Prepare Your Team

The modified-Angoff process depends on a representative sample of SMEs, usually 6-20. By “representative” I mean they should represent the various stakeholders. For instance, a certification for medical assistants might include experienced medical assistants, nurses, and physicians, from different areas of the country. You must train them about their role and how the process works, so they can understand the end goal and drive toward it.

Step 2: Define The Minimally Competent Candidate (MCC)

This concept is the core of the modified-Angoff method, though it is known by a range of terms or acronyms, including minimally qualified candidates (MQC) or just barely qualified (JBQ). The reasoning is that we want our exam to separate candidates that are qualified from those that are not. So we ask the SMEs to define what makes someone qualified (or unqualified!) from a perspective of skills and knowledge. This leads to a conceptual definition of an MCC. We then want to estimate what score this borderline candidate would achieve, which is the goal of the remainder of the study. This step can be conducted in person, or via webinar.

Step 3: Round 1 Ratings

Next, ask your SMEs to read through all the items on your test form and estimate the percentage of MCCs that would answer each correctly. A rating of 100 means the item is a slam dunk; it is so easy that every MCC would get it right. A rating of 40 is very difficult. Most ratings are in the 60-90 range if the items are well-developed. The ratings should be gathered independently; if everyone is in the same room, let them work on their own in silence. This can easily be conducted remotely, though.

Step 4: Discussion

This is where it gets fun. Identify items where there is the most disagreement (as defined by grouped frequency distributions or standard deviation) and make the SMEs discuss it. Maybe two SMEs thought it was super easy and gave it a 95 and two other SMEs thought it was super hard and gave it a 45. They will try to convince the other side of their folly. Chances are that there will be no shortage of opinions and you, as the facilitator, will find your greatest challenge is keeping the meeting on track. This step can be conducted in person, or via webinar.

Step 5: Round 2 Ratings

Raters then re-rate the items based on the discussion. The goal is that there will be a greater consensus. In the previous example, it’s not likely that every rater will settle on a 70. But if your raters all end up from 60-80, that’s OK. How do you know there is enough consensus? We recommend the inter-rater reliability suggested by Shrout and Fleiss (1979), as well as looking at inter-rater agreement and dispersion of ratings for each item. This use of multiple rounds is known as the Delphi approach; it pertains to all consensus-driven discussions in any field, not just psychometrics.

Step 6: Evaluate Results and Final Recommendation

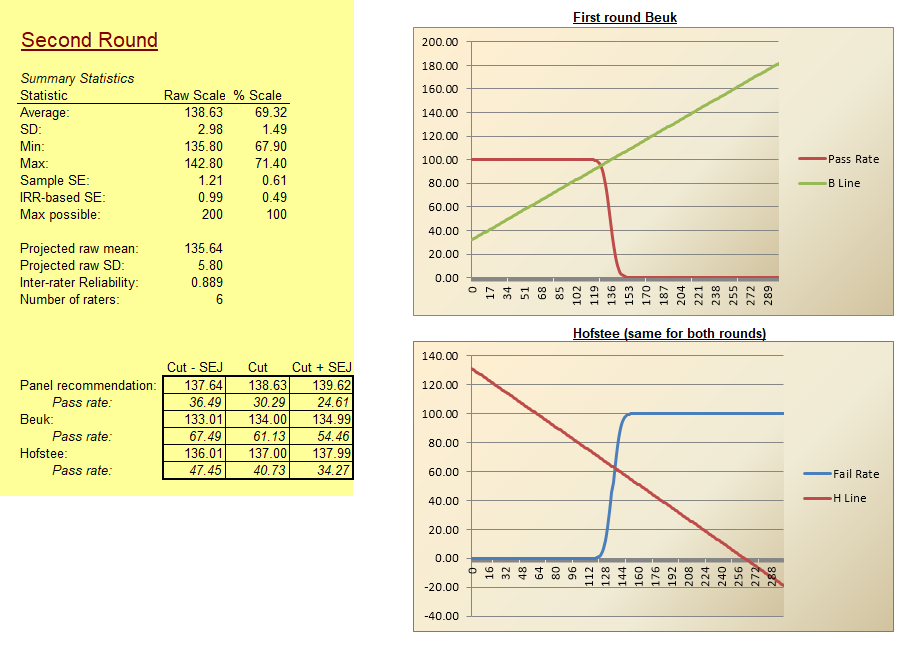

Evaluate the results from Round 2 as well as Round 1. An example of this is below. What is the recommended cutscore, which is the average or sum of the Angoff ratings depending on the scale you prefer? Did the reliability improve? Estimate the mean and SD of examinee scores (there are several methods for this). What sort of pass rate do you expect? Even better, utilize the Beuk Compromise as a “reality check” between the modified-Angoff approach and actual test data. You should take multiple points of view into account, and the SMEs need to vote on a final recommendation. They, of course, know the material and the candidates so they have the final say. This means that standard setting is a political process; again, reduce that effect as much as you can.

Some organizations do not set the cutscore at the recommended point, but at one standard error of judgment (SEJ) below the recommended point. The SEJ is based on the inter-rater reliability; note that it is NOT the standard error of the mean or the standard error of measurement. Some organizations use the latter; the former is just plain wrong (though I have seen it used by amateurs).

Step 7: Write Up Your Report

Validity refers to evidence gathered to support test score interpretations. Well, you have lots of relevant evidence here. Document it. If your test gets challenged, you’ll have all this in place. On the other hand, if you just picked 70% as your cutscore because it was a nice round number, you could be in trouble.

Additional Topics

In some situations, there are more issues to worry about. Multiple forms? You’ll need to equate in some way. Using item response theory? You’ll have to convert the cutscore from the modified-Angoff method onto the theta metric using the Test Response Function (TRF). New credential and no data available? That’s a real chicken-and-egg problem there.

Where Do I Go From Here?

Ready to take the next step and actually apply the modified-Angoff process to improving your exams? Sign up for a free account in our FastTest item banker. You can also download our Angoff analysis tool for free.

References

Shrout, P. E., & Fleiss, J. L. (1979). Intraclass correlations: uses in assessing rater reliability. Psychological bulletin, 86(2), 420.

Nathan Thompson earned his PhD in Psychometrics from the University of Minnesota, with a focus on computerized adaptive testing. His undergraduate degree was from Luther College with a triple major of Mathematics, Psychology, and Latin. He is primarily interested in the use of AI and software automation to augment and replace the work done by psychometricians, which has provided extensive experience in software design and programming. Dr. Thompson has published over 100 journal articles and conference presentations, but his favorite remains https://scholarworks.umass.edu/pare/vol16/iss1/1/ .