The IRT Item Difficulty Parameter

The item difficulty parameter from item response theory (IRT) is both a parameter that defines the item response function (IRF) and an important way to evaluate the performance of an item in a test.

Item Parameters and Models in IRT

There are three item parameters estimated under dichotomous IRT: the item difficulty (b), the item discrimination (a), and the pseudo-guessing parameter (c). IRT is actually a family of models, the most common of which are the dichotomous 1-parameter, 2-parameter, and 3-parameter logistic models (1PL, 2PL, and 3PL). The key parameter that is utilized in all three IRT models is the item difficulty parameter, b. The 3PL uses all three, the 2PL uses a and b, and the 1PL/Rasch uses only b.
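For reference, the 3PL is commonly written in the form below. This is the standard textbook expression rather than something taken from this article, and some presentations also include a scaling constant D of about 1.7 in the exponent.

```latex
P(X = 1 \mid \theta) = c + (1 - c)\,\frac{1}{1 + e^{-a(\theta - b)}}
```

Setting c = 0 gives the 2PL, and additionally fixing a to a common value (often 1) gives the 1PL.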

Interpreting the IRT item difficulty parameter

The b parameter is an index of how difficult the item is: the construct level at which we would expect examinees to have a probability of 0.50 (assuming no guessing) of giving the keyed item response. Recall that in IRT we model the probability of a correct response on a given item, Pr(X), as a function of examinee ability (θ) and certain properties of the item itself. This function is called the item response function (IRF) or item characteristic curve (ICC), and it is the basic building block of IRT, since all the other constructs depend on this curve.

The IRF plots the probability that an examinee will respond correctly to an item as a function of a latent trait θ. The probability of a correct response results from the interaction between the examinee’s ability θ and the item difficulty parameter b. As θ increases, so does the probability that the examinee will respond correctly to the item. The b parameter is a location index that indicates the position of the item’s function on the ability scale, showing how difficult or easy a specific item is. The higher the b parameter, the higher the ability required for an examinee to have a 50% chance of answering the item correctly. Difficult items are located to the right, at the higher end of the ability scale, while easier items are located to the left, at the lower end. Typical values of item difficulty range from −3 to +3. Items with b values near −3 are very easy, while items with b values near +3 are very difficult for the examinees.
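A minimal Python sketch of the IRF, assuming the common logistic form without a scaling constant. The function name `irf` and the example values are illustrative and do not come from the article.

```python
import math

def irf(theta, b, a=1.0, c=0.0):
    """Probability of a correct response under the 3PL.

    With c=0 this is the 2PL; with c=0 and a common a it is the 1PL.
    """
    return c + (1.0 - c) / (1.0 + math.exp(-a * (theta - b)))

# With no guessing, an examinee whose ability equals b has a 50% chance.
p_at_b = irf(theta=1.2, b=1.2)

# With a guessing floor c, the probability at theta == b is (1 + c) / 2 instead.
p_at_b_guess = irf(theta=1.2, b=1.2, c=0.2)

# Raising b shifts the curve to the right: the same examinee is less likely to succeed.
p_easy = irf(theta=0.0, b=-1.0)
p_hard = irf(theta=0.0, b=1.0)

print(p_at_b, p_at_b_guess, p_easy, p_hard)
```

The second call illustrates why the 0.50 interpretation carries the "assuming no guessing" caveat: once c is above zero, the probability at θ = b is higher than 0.50.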

You can interpret the b parameters as a sort of “z-score for the item.” If the value is -1.0, that means the item is appropriate for examinees at a score of -1.0 (roughly the 16th percentile, if ability is normally distributed).
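The z-score reading can be checked with a short calculation. This sketch assumes that ability is scaled to a standard normal distribution in the population of interest, which is a common convention but not guaranteed for every calibration. The helper name `percentile_for_b` is illustrative.

```python
import math

def percentile_for_b(b):
    """Percent of a standard normal ability distribution that falls below b."""
    return 100.0 * 0.5 * (1.0 + math.erf(b / math.sqrt(2.0)))

for b in (-1.0, 0.0, 1.0):
    print(f"b = {b:+.1f} -> percentile {percentile_for_b(b):.1f}")
```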

The interpretation of b runs in the opposite direction from the item difficulty statistic in classical test theory (CTT), the p-value. In IRT, a low b indicates an easy item and a high b indicates a difficult item, because a higher b requires a higher θ for the same probability of a correct response. With the CTT p-value, a low value is hard and a high value is easy, which is why the p-value is sometimes called item facility.
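The opposite directions of the two statistics can be illustrated numerically. The sketch below assumes a 1PL item and a standard normal ability distribution, and approximates the expected proportion correct (the CTT p-value) with a simple midpoint rule. The function names and the grid settings are illustrative choices.

```python
import math

def irf_1pl(theta, b):
    """1PL probability of a correct response (discrimination fixed at 1)."""
    return 1.0 / (1.0 + math.exp(-(theta - b)))

def expected_p_value(b, lo=-6.0, hi=6.0, steps=1200):
    """Approximate proportion correct for a standard normal population."""
    width = (hi - lo) / steps
    total = 0.0
    for i in range(steps):
        theta = lo + (i + 0.5) * width                     # midpoint of the slice
        density = math.exp(-0.5 * theta * theta) / math.sqrt(2.0 * math.pi)
        total += irf_1pl(theta, b) * density * width
    return total

p_values = {b: expected_p_value(b) for b in (-2.0, 0.0, 2.0)}
for b, p in p_values.items():
    print(f"b = {b:+.1f} -> expected p-value {p:.2f}")
```

As b goes up, the expected p-value goes down: the hard item has the high b and the low p-value.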

Examples of the IRT item difficulty parameter

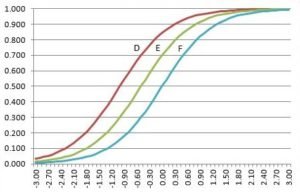

Let’s consider an example. There are three IRFs below for three different items, D, E, and F. All three items have the same level of discrimination but different item difficulty values on the ability scale. In the 1PL, it is assumed that the only item characteristic that influences examinee performance is the item difficulty (b parameter) and that all items are equally discriminating. The b-values for items D, E, and F are −0.5, 0.0, and 1.0 respectively. Item D is quite an easy item. Item E is an item of medium difficulty: the probability of a correct response is low at the lowest ability levels and near 1 at the highest ability levels. Item F is a hard item: the probability of a correct response is low along most of the ability scale and increases only at the higher ability levels.
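The comparison can also be tabulated. This sketch uses the b-values given for items D, E, and F and assumes a common discrimination of 1 with no scaling constant. The probabilities are computed from the 1PL formula, not read from the figure, so they may differ slightly from the plotted curves.

```python
import math

def irf_1pl(theta, b):
    """1PL probability of a correct response (discrimination fixed at 1)."""
    return 1.0 / (1.0 + math.exp(-(theta - b)))

items = {"D": -0.5, "E": 0.0, "F": 1.0}     # b-values from the example
thetas = [-3.0, -1.5, 0.0, 1.5, 3.0]        # illustrative ability levels

print("theta " + "".join(f"{name:>8}" for name in items))
for theta in thetas:
    row = "".join(f"{irf_1pl(theta, b):8.2f}" for b in items.values())
    print(f"{theta:+5.1f} {row}")
```

At every ability level, the probability of success is highest for item D and lowest for item F, which is what it means for the curves to be ordered from left to right.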

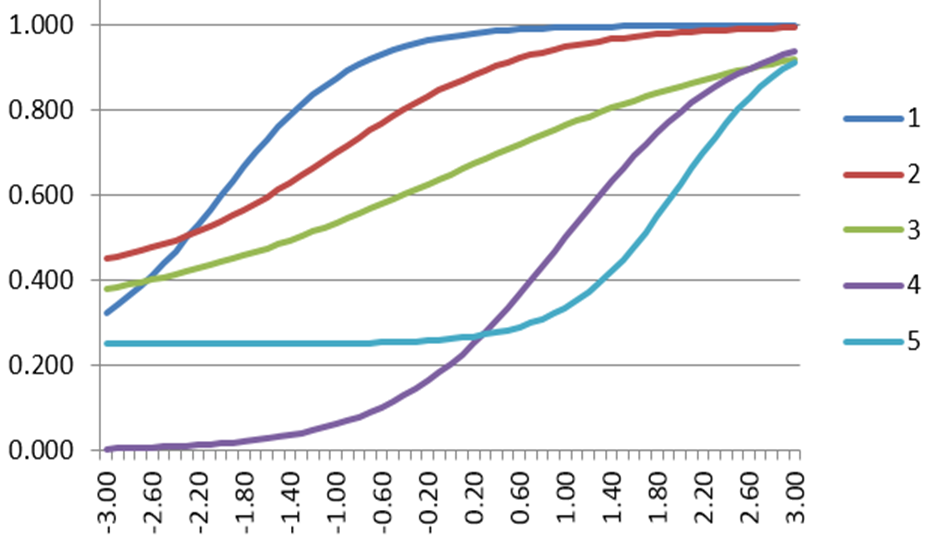

Look at the five IRFs below and check whether you are able to compare the items in terms of their difficulty. Below are some specific questions and answers for comparing the items.

- Which item is the hardest, requiring the highest ability level, on average, to get it correct?

Blue (No 5), as it is the furthest to the right.

- Which item is the easiest?

Dark blue (No 1), as it is the furthest to the left.

How do I calculate the IRT item difficulty?

You’ll need specialized IRT software such as Xcalibre, which is available as a free download.
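To give a feel for what such software does, here is a deliberately simplified sketch. It estimates b for a single 1PL item by maximum likelihood with Newton-Raphson, treating examinee abilities as known. This is not how Xcalibre or any particular package works: operational programs estimate abilities and all item parameters together, typically with more sophisticated methods. The data are simulated, and all names and values are hypothetical.

```python
import math
import random

def irf_1pl(theta, b):
    """1PL probability of a correct response (discrimination fixed at 1)."""
    return 1.0 / (1.0 + math.exp(-(theta - b)))

def estimate_b(thetas, responses, b=0.0, iterations=25):
    """Newton-Raphson ML estimate of b for one item, abilities treated as known."""
    for _ in range(iterations):
        probs = [irf_1pl(t, b) for t in thetas]
        gradient = sum(p - x for p, x in zip(probs, responses))  # d logL / d b
        information = sum(p * (1.0 - p) for p in probs)          # -d2 logL / d b2
        b += gradient / information                              # Newton step
    return b

# Simulated data (hypothetical): 5000 examinees, true difficulty 0.5.
rng = random.Random(42)
true_b = 0.5
thetas = [rng.gauss(0.0, 1.0) for _ in range(5000)]
responses = [1 if rng.random() < irf_1pl(t, true_b) else 0 for t in thetas]

b_hat = estimate_b(thetas, responses)
print(f"estimated b = {b_hat:.2f} (true value {true_b})")
```

The update rule moves b upward when the model predicts more correct responses than were observed, which matches the interpretation of b: an item that is answered correctly less often than expected is harder than currently assumed.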

Laila Issayeva M.Sc.
