Nathan Thompson articles

What is Automated Essay Scoring?

Automated essay scoring (AES) is an important application of machine learning and artificial intelligence to the field of psychometrics and assessment. In fact, it’s been around far longer than “machine learning” and “artificial intelligence” have

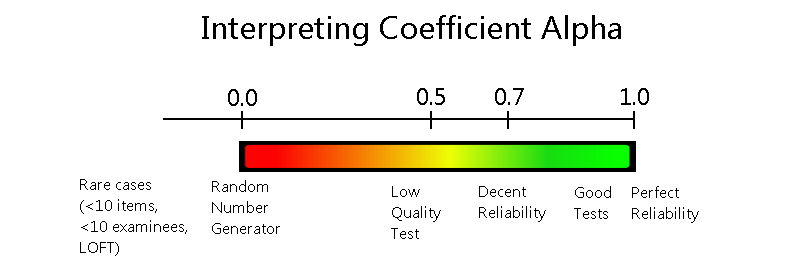

Coefficient Alpha Reliability Index

Coefficient alpha reliability, sometimes called Cronbach’s alpha, is a statistical index that is used to evaluate the internal consistency or reliability of an assessment. That is, it quantifies how consistent we can expect scores to
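As a rough illustration of the index described above, here is a minimal sketch of the standard coefficient alpha formula, alpha = k/(k-1) * (1 - sum of item variances / variance of total scores). The function name `cronbach_alpha` and the small response matrix `example_scores` are hypothetical, made up for this example and not taken from the article.

```python
from statistics import variance


def cronbach_alpha(scores):
    """Coefficient alpha for a score matrix.

    scores: list of rows, one row per examinee, one column per item.
    Uses sample variances (n - 1 denominator) throughout.
    """
    k = len(scores[0])  # number of items
    if k < 2:
        raise ValueError("alpha needs at least two items")
    # Variance of each item (column) across examinees
    item_vars = [variance(col) for col in zip(*scores)]
    # Variance of the total (row-sum) scores
    total_var = variance([sum(row) for row in scores])
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


# Hypothetical data: 5 examinees by 4 dichotomously scored items
example_scores = [
    [1, 1, 1, 0],
    [1, 0, 1, 1],
    [0, 0, 1, 0],
    [1, 1, 1, 1],
    [0, 0, 0, 0],
]

print(round(cronbach_alpha(example_scores), 3))
```

The sketch assumes complete data and a nonzero total-score variance; a real analysis would need to handle missing responses and degenerate cases.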

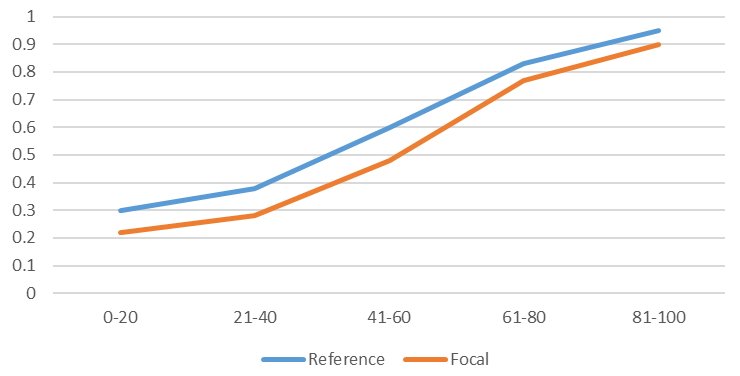

Differential Item Functioning (DIF)

Differential item functioning (DIF) is a term in psychometrics for the statistical analysis of assessment data to determine if items are performing in a biased manner against some group of examinees. This analysis is often

“Dichotomous” Vs “Polytomous” in IRT?

What is the difference between the terms dichotomous and polytomous in psychometrics? Well, these terms represent two subcategories within item response theory (IRT), which is the dominant psychometric paradigm for constructing, scoring, and analyzing assessments.

How do I develop a test security plan?

A test security plan (TSP) is a document that lays out how an assessment organization addresses the security of its intellectual property, to protect the validity of the exam scores. If a test is compromised, the

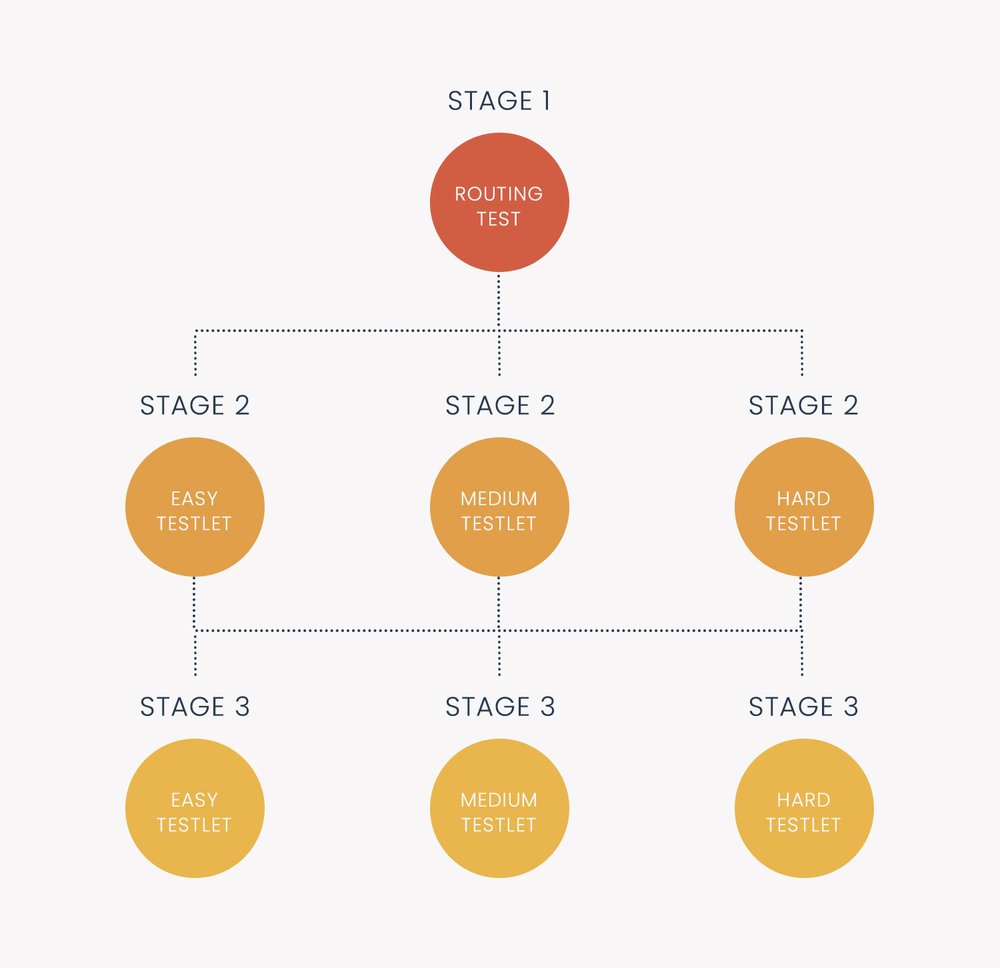

Multistage Testing

Multistage testing (MST) is a type of computerized adaptive testing (CAT). This means it is an exam delivered on computers which dynamically personalize it for each examinee or student. Typically, this is done with respect

Automated Item Generation

Automated item generation (AIG) is a paradigm for developing assessment items (test questions), utilizing principles of artificial intelligence and automation. As the name suggests, it tries to automate some or all of the effort involved

Ebel Method of Standard Setting

The Ebel method of standard setting is a psychometric approach to establish a cutscore for tests consisting of multiple-choice questions. It is usually used for high-stakes examinations in the fields of higher education, medical and health

Distractor Analysis for Test Items

Distractor analysis refers to the process of evaluating the performance of incorrect answers vs the correct answer for multiple choice items on a test. It is a key step in the psychometric analysis process to

What is multi-modal test delivery?

Multi-modal test delivery refers to an exam that is capable of being delivered in several different ways, or to an online testing software platform designed to support this process. For example, you might provide the

Confidence Interval for Test Scores

A confidence interval for test scores is a common way to interpret the results of a test by phrasing it as a range rather than a single number. We all understand that tests provide imperfect

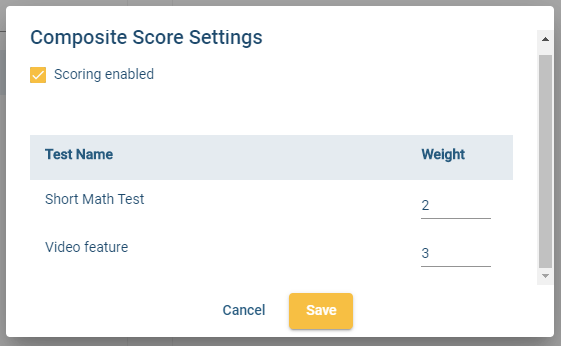

Composite Test Score

A composite test score refers to a test score that is combined from the scores of multiple tests, that is, a test battery. The purpose is to create a single number that succinctly summarizes examinee

Inter-Rater Reliability vs Agreement

Inter-rater reliability and inter-rater agreement are important concepts in certain psychometric situations. Many assessments never involve raters, but plenty of assessments do. This article will define

Split Half Reliability Index

Split Half Reliability is an internal consistency approach to quantifying the reliability of a test, in the paradigm of classical test theory. Reliability refers to the repeatability or consistency of the test scores; we definitely
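One common way to compute such an index can be sketched as follows: split the items into two halves, correlate the half scores, and step the correlation up to full test length with the Spearman-Brown formula, 2r / (1 + r). The odd/even split, the function names, and the data below are assumptions chosen for illustration; the article may use a different split.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(ss_x * ss_y)


def split_half_reliability(scores):
    """Odd/even split-half reliability with Spearman-Brown correction.

    scores: list of rows, one row per examinee, one column per item.
    """
    # Half scores: items in positions 1, 3, 5... vs positions 2, 4, 6...
    first_half = [sum(row[0::2]) for row in scores]
    second_half = [sum(row[1::2]) for row in scores]
    r = pearson(first_half, second_half)
    # Spearman-Brown: estimate reliability of the full-length test
    return 2 * r / (1 + r)


# Hypothetical data: 5 examinees by 4 dichotomously scored items
example_scores = [
    [1, 1, 1, 0],
    [1, 0, 1, 1],
    [0, 0, 1, 0],
    [1, 1, 1, 1],
    [0, 0, 0, 0],
]

print(round(split_half_reliability(example_scores), 3))
```

The result depends on how the items are split, which is one reason the choice of halves is worth stating whenever this index is reported.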

What is a Cutscore or Passing Point?

A cutscore or passing point (also called a cut-off score or cutoff score) is a score on a test that is used to categorize examinees. The most common example of this is pass/fail, which we

Nedelsky Method of Standard Setting

The Nedelsky method is an approach to setting the cutscore of an exam. Originally suggested by Nedelsky (1954), it is an early attempt to bring a quantitative, rigorous procedure to the process of standard setting.

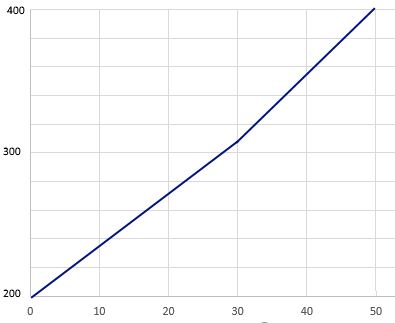

What is Scaled Scoring on a Test?

There are 4 types of scaled scoring. The rest of this post will get into some psychometric details on these, for advanced readers. Normal/standardized: This is an approach to scaled scoring that many of us

What are Enemy Items?

Enemy items is a psychometric term that refers to two test questions (items) which should not be on the same test form (if linear) or seen by the same examinee (if LOFT or adaptive). This can